
AlexaClientSDK

3.0.0

A cross-platform, modular SDK for interacting with the Alexa Voice Service

|

|

AlexaClientSDK

3.0.0

A cross-platform, modular SDK for interacting with the Alexa Voice Service

|

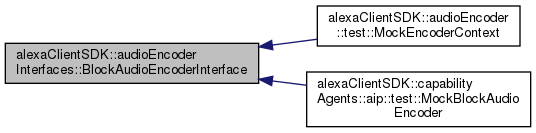

Block audio encoder interface.

#include <BlockAudioEncoderInterface.h>

Public Types

| Keyword | Declaration | Description |
|---|---|---|
| using | Byte = unsigned char | Byte data type. |
| using | Bytes = std::vector<Byte> | Byte array data type for encoding output. |

Public Member Functions

| Return type | Declaration | Description |
|---|---|---|
| virtual bool | init (avsCommon::utils::AudioFormat inputFormat)=0 | Pre-initialize the encoder. |
| virtual size_t | getInputFrameSize ()=0 | Maximum number of input samples per processSamples() call. |
| virtual size_t | getOutputFrameSize ()=0 | Provide maximum output frame size. |
| virtual bool | requiresFullyRead ()=0 | Return whether the input must contain a full frame. |
| virtual avsCommon::utils::AudioFormat | getAudioFormat ()=0 | Return output audio format. |
| virtual std::string | getAVSFormatName ()=0 | AVS format name for encoded audio. |
| virtual bool | start (Bytes &preamble)=0 | Start the encoding session. |
| virtual bool | processSamples (Bytes::const_iterator begin, Bytes::const_iterator end, Bytes &buffer)=0 | Encode a block of audio. |
| virtual bool | flush (Bytes &buffer)=0 | Flush buffered data, if any. |
| virtual void | close ()=0 | Close encoding session. |
| virtual | ~BlockAudioEncoderInterface ()=default | Destructor. |

Block audio encoder interface.

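Block audio encoder provides a generic interface for converting audio stream data. Encoding is performed within a session that starts with an init() call and ends with a close() call.

After initialization, block audio encoding has the following stages:

- A call to start() begins the encoding session and may produce preamble data.
- Calls to processSamples() encode blocks of input audio; it can be called any number of times.
- A call to flush() appends any remaining buffered encoded data.

After a call to close(), the encoder instance can be reused for another encoding operation.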
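The session lifecycle described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the SDK's implementation: AudioFormat is a simplified stand-in for avsCommon::utils::AudioFormat, PassThroughEncoder and encodeSession are hypothetical names, the 160-sample frame size is an arbitrary illustrative value, and bytes per sample is assumed to be sampleSizeInBits / 8 * numChannels.

```cpp
#include <algorithm>
#include <cstddef>
#include <string>
#include <vector>

// Simplified stand-in for avsCommon::utils::AudioFormat (assumption: only the
// fields this sketch needs; the real type carries more information).
struct AudioFormat {
    unsigned int sampleRateHz;
    unsigned int sampleSizeInBits;
    unsigned int numChannels;
};

// Local copy of the interface shape documented on this page, using the
// stand-in AudioFormat so the sketch compiles without the SDK.
class BlockAudioEncoderInterface {
public:
    using Byte = unsigned char;
    using Bytes = std::vector<Byte>;
    virtual bool init(AudioFormat inputFormat) = 0;
    virtual std::size_t getInputFrameSize() = 0;
    virtual std::size_t getOutputFrameSize() = 0;
    virtual bool requiresFullyRead() = 0;
    virtual AudioFormat getAudioFormat() = 0;
    virtual std::string getAVSFormatName() = 0;
    virtual bool start(Bytes& preamble) = 0;
    virtual bool processSamples(Bytes::const_iterator begin, Bytes::const_iterator end, Bytes& buffer) = 0;
    virtual bool flush(Bytes& buffer) = 0;
    virtual void close() = 0;
    virtual ~BlockAudioEncoderInterface() = default;
};

using Bytes = BlockAudioEncoderInterface::Bytes;

// Hypothetical encoder that copies PCM input through unchanged (no real codec).
class PassThroughEncoder : public BlockAudioEncoderInterface {
public:
    bool init(AudioFormat inputFormat) override {
        m_format = inputFormat;
        return inputFormat.sampleSizeInBits % 8 == 0 && bytesPerSample() > 0;
    }
    std::size_t getInputFrameSize() override { return 160; }  // illustrative value
    std::size_t getOutputFrameSize() override { return getInputFrameSize() * bytesPerSample(); }
    bool requiresFullyRead() override { return false; }
    AudioFormat getAudioFormat() override { return m_format; }
    // Assumes 16 kHz, 16-bit mono input; other formats need a different name.
    std::string getAVSFormatName() override { return "AUDIO_L16_RATE_16000_CHANNELS_1"; }
    bool start(Bytes& /*preamble*/) override { return true; }  // no preamble
    bool processSamples(Bytes::const_iterator begin, Bytes::const_iterator end, Bytes& buffer) override {
        if (!(begin < end)) {
            return false;  // empty or reversed range is an error
        }
        buffer.insert(buffer.end(), begin, end);  // "encoding" is a plain copy
        return true;
    }
    bool flush(Bytes& /*buffer*/) override { return true; }  // nothing is buffered
    void close() override {}

private:
    std::size_t bytesPerSample() const { return m_format.sampleSizeInBits / 8 * m_format.numChannels; }
    AudioFormat m_format{};
};

// Runs one encoding session: init() -> start() -> processSamples() per block
// -> flush() -> close(). Returns false if any stage fails.
bool encodeSession(BlockAudioEncoderInterface& encoder, const AudioFormat& format, const Bytes& pcm, Bytes& out) {
    // Assumption: bytes per sample = sampleSizeInBits / 8 * numChannels.
    const std::size_t bytesPerSample = format.sampleSizeInBits / 8 * format.numChannels;
    if (bytesPerSample == 0 || !encoder.init(format)) {
        return false;
    }
    const std::size_t frameBytes = encoder.getInputFrameSize() * bytesPerSample;
    bool ok = frameBytes > 0 && encoder.start(out);
    // Only whole samples are passed on; a trailing partial sample is ignored.
    const std::size_t usable = pcm.size() - pcm.size() % bytesPerSample;
    for (std::size_t offset = 0; ok && offset < usable; offset += frameBytes) {
        const std::size_t chunk = std::min(frameBytes, usable - offset);
        if (encoder.requiresFullyRead() && chunk < frameBytes) {
            break;  // a partial final frame is discarded
        }
        const auto first = pcm.begin() + static_cast<std::ptrdiff_t>(offset);
        ok = encoder.processSamples(first, first + static_cast<std::ptrdiff_t>(chunk), out);
    }
    ok = ok && encoder.flush(out);
    encoder.close();  // always end the session so the instance can be reused
    return ok;
}
```

Because close() is called on every path after a successful init(), the same encoder object can be passed to encodeSession() again for the next session.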

using alexaClientSDK::audioEncoderInterfaces::BlockAudioEncoderInterface::Byte = unsigned char

Byte data type.

using alexaClientSDK::audioEncoderInterfaces::BlockAudioEncoderInterface::Bytes = std::vector<Byte>

Byte array data type for encoding output.

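alexaClientSDK::audioEncoderInterfaces::BlockAudioEncoderInterface::~BlockAudioEncoderInterface () [virtual], [default]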

Destructor.

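virtual void alexaClientSDK::audioEncoderInterfaces::BlockAudioEncoderInterface::close () [pure virtual]

Close encoding session.

Notifies the encoder that the session has ended. Any backend library may then be deinitialized to release its memory and threads.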

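virtual bool alexaClientSDK::audioEncoderInterfaces::BlockAudioEncoderInterface::flush (Bytes &buffer) [pure virtual]

Flush buffered data, if any.

This method appends any buffered encoded data to buffer.

| Direction | Parameter | Description |
|---|---|---|
| [out] | buffer | Buffer for appending data. The buffer contents are not modified on error. |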
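One way an implementation might honor the rule that buffer is left unmodified on error is to produce output into a scratch vector and append it only on success. The sketch below uses a hypothetical appendIfOk helper that takes the encoding work as a callable; it is not part of the SDK. The same pattern fits start(), whose preamble carries the same guarantee.

```cpp
#include <functional>
#include <vector>

using Bytes = std::vector<unsigned char>;

// Hypothetical helper (not an SDK function): runs `produce` into a scratch
// buffer and appends its output to `buffer` only when `produce` succeeds,
// so `buffer` is left untouched on error.
bool appendIfOk(Bytes& buffer, const std::function<bool(Bytes&)>& produce) {
    Bytes scratch;
    if (!produce(scratch)) {
        return false;  // discard partial output; buffer unchanged
    }
    buffer.insert(buffer.end(), scratch.begin(), scratch.end());
    return true;
}
```

Inside a concrete flush(), the callable would wrap whatever call drains the backend codec; the extra copy is the price paid for the all-or-nothing guarantee.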

virtual avsCommon::utils::AudioFormat alexaClientSDK::audioEncoderInterfaces::BlockAudioEncoderInterface::getAudioFormat () [pure virtual]

Return output audio format.

This method returns the AudioFormat that describes the encoded output stream.

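virtual std::string alexaClientSDK::audioEncoderInterfaces::BlockAudioEncoderInterface::getAVSFormatName () [pure virtual]

AVS format name for encoded audio.

This method returns a string representation of the output format that the AVS cloud service can recognize.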

virtual size_t alexaClientSDK::audioEncoderInterfaces::BlockAudioEncoderInterface::getInputFrameSize () [pure virtual]

Returns the maximum number of samples that can be processed at once. This limits input stream buffering: the number of samples passed to a single processSamples() call never exceeds this value.

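virtual size_t alexaClientSDK::audioEncoderInterfaces::BlockAudioEncoderInterface::getOutputFrameSize () [pure virtual]

Provide maximum output frame size.

The method provides an estimate of the output frame size in bytes. This value is used to allocate the necessary buffer space in the output audio stream.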
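As a worked example of this estimate, assume a hypothetical pass-through encoder whose output is uncompressed PCM: one output frame is then exactly one input frame, and an output stream that must hold N frames needs N times that many bytes. The helper names and the 320-sample, 16-bit mono figures are illustrative assumptions; a compressing encoder would report its own upper bound instead.

```cpp
#include <cstddef>

// Hypothetical helper: for an encoder whose output is uncompressed PCM, one
// output frame is one input frame copied verbatim, so its size in bytes is
// samples * bytes per sample * channels.
constexpr std::size_t pcmOutputFrameSize(std::size_t inputFrameSamples, std::size_t sampleSizeInBits,
                                         std::size_t numChannels) {
    return inputFrameSamples * (sampleSizeInBits / 8) * numChannels;
}

// Hypothetical helper: bytes to reserve in an output audio stream that must
// hold `frames` encoded frames, each at most `outputFrameSize` bytes.
constexpr std::size_t outputStreamCapacity(std::size_t outputFrameSize, std::size_t frames) {
    return outputFrameSize * frames;
}

// Worked example: 320 samples of 16-bit mono PCM per frame -> 640 bytes;
// room for 50 such frames -> 32000 bytes.
static_assert(pcmOutputFrameSize(320, 16, 1) == 640, "16-bit mono frame");
static_assert(outputStreamCapacity(640, 50) == 32000, "capacity for 50 frames");
```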

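virtual bool alexaClientSDK::audioEncoderInterfaces::BlockAudioEncoderInterface::init (avsCommon::utils::AudioFormat inputFormat) [pure virtual]

Pre-initialize the encoder.

Performs pre-initialization before the actual encoding session begins. Note that this function is called every time before a new encoding session starts.

| Direction | Parameter | Description |
|---|---|---|
| [in] | inputFormat | The AudioFormat describing the incoming PCM frames. |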

virtual bool alexaClientSDK::audioEncoderInterfaces::BlockAudioEncoderInterface::processSamples (Bytes::const_iterator begin, Bytes::const_iterator end, Bytes &buffer) [pure virtual]

Encode a block of audio.

This method encodes a block of input audio samples. The samples are provided as a byte range delimited by the begin and end iterator arguments. The input bytes must represent complete samples; the size of each sample is known from the AudioFormat passed to init(). If requiresFullyRead() returns true, the number of samples must be equal to getInputFrameSize(). If requiresFullyRead() returns false, the number of samples must be greater than zero and not more than getInputFrameSize().

This method can be called any number of times after start() has been called.

| Direction | Parameter | Description |
|---|---|---|
| [in] | begin | Bytes iterator for the first byte of samples. This parameter must be less than end, or the method fails with an error. |
| [in] | end | Bytes iterator for the end of the sample array. This parameter must be greater than begin, or the method fails with an error. |
| [out] | buffer | Output buffer for encoded data. |
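The preconditions above can be collected into a single check. This is a hypothetical helper (isValidSampleBlock is not an SDK function); it assumes the caller passes a bytesPerSample value derived from the AudioFormat given to init(), for example sampleSizeInBits / 8 * numChannels.

```cpp
#include <cstddef>
#include <iterator>
#include <vector>

using Bytes = std::vector<unsigned char>;

// Hypothetical helper that checks the processSamples() preconditions:
// a non-empty range of whole samples, sized according to requiresFullyRead().
bool isValidSampleBlock(Bytes::const_iterator begin, Bytes::const_iterator end, std::size_t bytesPerSample,
                        std::size_t inputFrameSize, bool requiresFullyRead) {
    if (bytesPerSample == 0 || !(begin < end)) {
        return false;  // begin must be strictly before end
    }
    const auto byteCount = static_cast<std::size_t>(std::distance(begin, end));
    if (byteCount % bytesPerSample != 0) {
        return false;  // input must contain whole samples only
    }
    const std::size_t samples = byteCount / bytesPerSample;
    if (requiresFullyRead) {
        return samples == inputFrameSize;  // exactly one full frame
    }
    return samples <= inputFrameSize;  // at least one sample is guaranteed above
}
```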

virtual bool alexaClientSDK::audioEncoderInterfaces::BlockAudioEncoderInterface::requiresFullyRead () [pure virtual]

Return whether the input must contain a full frame.

Determines whether the input stream must be buffered until it holds the full number of samples given by getInputFrameSize(). This value changes how processSamples() is called during the encoding session, which is useful when the backend encoder requires a fixed number of input samples.

If the encoding session is shut down before the buffer is completely filled (for example, because AudioEncoderInterface::stopEncoding() has been called or the end of the data stream has been reached), any partial data is discarded.
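The buffering behavior described above can be sketched as a small accumulator sitting between the input stream and processSamples(). FrameAccumulator is a hypothetical class, not the SDK's internal buffering; it assumes input arrives in whole samples and that frameBytes equals getInputFrameSize() multiplied by the bytes per sample.

```cpp
#include <cstddef>
#include <vector>

using Bytes = std::vector<unsigned char>;

// Hypothetical input stage in front of processSamples(). Assumes input arrives
// in whole samples and frameBytes = getInputFrameSize() * bytes per sample.
class FrameAccumulator {
public:
    FrameAccumulator(std::size_t frameBytes, bool fullyRead) : m_frameBytes(frameBytes), m_fullyRead(fullyRead) {}

    // Appends incoming bytes and returns the blocks now ready for processSamples().
    std::vector<Bytes> push(const Bytes& data) {
        m_pending.insert(m_pending.end(), data.begin(), data.end());
        std::vector<Bytes> ready;
        const auto frame = static_cast<std::ptrdiff_t>(m_frameBytes);
        while (m_frameBytes > 0 && m_pending.size() >= m_frameBytes) {
            // Emit one full frame and drop it from the pending buffer.
            ready.emplace_back(m_pending.begin(), m_pending.begin() + frame);
            m_pending.erase(m_pending.begin(), m_pending.begin() + frame);
        }
        if (!m_fullyRead && !m_pending.empty()) {
            // requiresFullyRead() == false: a shorter, non-empty block is allowed.
            ready.push_back(m_pending);
            m_pending.clear();
        }
        return ready;
    }

    // End of stream or stopEncoding(): any partial frame still pending is
    // discarded. Returns the number of bytes dropped.
    std::size_t finish() {
        const std::size_t dropped = m_pending.size();
        m_pending.clear();
        return dropped;
    }

private:
    std::size_t m_frameBytes;
    bool m_fullyRead;
    Bytes m_pending;
};
```

With fullyRead set, only exact frames ever leave the accumulator, which is what an encoder reporting requiresFullyRead() == true expects; without it, data is forwarded as soon as it arrives, so nothing is left to discard.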

virtual bool alexaClientSDK::audioEncoderInterfaces::BlockAudioEncoderInterface::start (Bytes &preamble) [pure virtual]

Start the encoding session.

This function starts a new encoding session after a call to init(). If the block audio encoder produces any initial data, it can be returned through the preamble buffer.

After the session has started, the caller can invoke processSamples() until the session is closed with close().

| Direction | Parameter | Description |
|---|---|---|
| [out] | preamble | Destination buffer for encoding preamble output. The method appends preamble bytes to preamble if needed. On error, preamble is not modified. |

AlexaClientSDK 3.0.0 - Copyright 2016-2022 Amazon.com, Inc. or its affiliates. All Rights Reserved. Licensed under the Apache License, Version 2.0